Predicting Positive vs Negative Movie Reviews

This is a step-by-step walk through of sentiment analysis on IMDb movie reviews. The goal is to develop[ a model that accurately predicts whether a reciew is positive or negative by uncovering laanguage patterns that drive sentiment. This is a Text Classification task.

The Data

The dataset used consists of 50,000 film reviews scraped from IMDb that are labelled as either positive or negative. It can be found on Kaggle The goal is to develop an optimal model that accurately predicts sentiment and identifies patterns in review content.

Data Processing

We cleaned the text by:

- Removing special characters, URLs, and HTML tags

- Converting text to lowercase

- Removing stopwords using NLTK

- Tokenizing text into unigrams, bigrams, and trigrams

- Adding

STARTandSTOPmarkers to preserve context

These steps reduced noise and made the linguistic structure suitable for modeling.

Exploratory Data Analysis

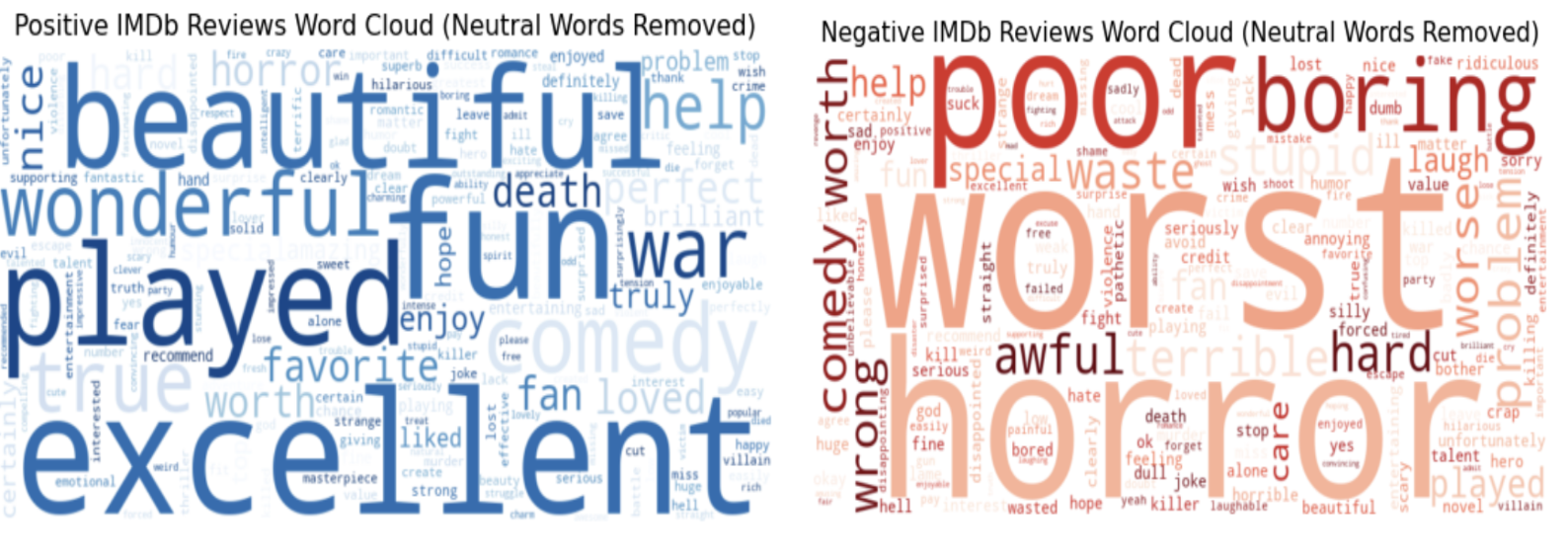

Before modeling, we visualized word frequency patterns.

Positive reviews frequently used terms like “excellent” and “fun,” while negative reviews often used “worst” and “poor.”

These clear distinctions highlighted the potential of text-based sentiment modeling.

Methodology

In order to capture relationships between text, we need to transform it into embeddings. Embedding are numerical representations of words, words with similar meaning will have similar representations.

There are different types of text embedding methods, some more complex than others. This schematic captures the 3 general categories.

We evaluated the following:

- Bag of Words (BoW)

- TF-IDF

- GloVe

- BERT embeddings

Each embedding was used across several machine learning models: Logistic Regression, K-Nearest Neighbors (KNN), Random Forest, a Deep Neural Network (DNN), and a fine-tuned BERT Transformer model.

Logistic Regression

The TF-IDF Logistic Regression model performed best, achieving 89.83% accuracy and AUC = 0.96, followed closely by BoW (89.4% accuracy).

Regularization experiments showed that L2 penalties (Ridge regression) were consistently optimal, suggesting that allowing all features to contribute led to more accurate predictions.

K-Nearest Neighbors

KNN models were optimized through grid search for distance metrics and weighting schemes.

The best-performing configuration used TF-IDF embeddings with distance-based weighting, achieving 79.92% accuracy and AUC = 0.88.

Performance decreased significantly for GloVe embeddings (54.66% accuracy), indicating poorer feature representation.

Random Forest

Random Forest models captured complex word interactions.

With TF-IDF embeddings, accuracy reached 84.02%, while BERT embeddings followed closely at 83.62%.

BoW and GloVe lagged behind due to their limited ability to represent nuanced sentiment context.

Deep Neural Network (DNN)

A 3-layer DNN trained with TF-IDF embeddings achieved 87.23% accuracy and AUC = 0.95, outperforming simpler models but not BERT.

The model used dropout regularization and ReLU activations to prevent overfitting.

Fine-tuned BERT Transformer

The pre-trained BERT model achieved the highest overall performance, with 91.74% accuracy and AUC = 0.97.

Fine-tuning for two epochs with a learning rate of 2e-5 and batch size of 16 yielded highly contextual sentiment understanding.

Results Summary

| Model | Embedding | Accuracy | AUC |

|---|---|---|---|

| Logistic Regression | TF-IDF | 89.83% | 0.96 |

| KNN | TF-IDF | 79.92% | 0.88 |

| Random Forest | TF-IDF | 84.02% | 0.91 |

| DNN | TF-IDF | 87.23% | 0.95 |

| BERT Transformer | BERT | 91.74% | 0.97 |

TF-IDF consistently yielded the most reliable results across traditional models, while BERT surpassed them with its ability to capture deeper contextual meaning.

Conclusion & Future Work

This analysis demonstrates that while traditional models like Logistic Regression and DNN perform well with TF-IDF features, contextual embeddings like BERT significantly enhance accuracy for nuanced text.

Future improvements may include:

- Comparing performance with XLNet or GPT-based models

- Data augmentation via synonym replacement or paraphrasing

- Combining ensemble models to enhance robustness

Overall, the project highlights how effective feature representation is central to sentiment prediction performance.

Repository: GitHub Project Link Dataset: IMDb 50K Movie Reviews